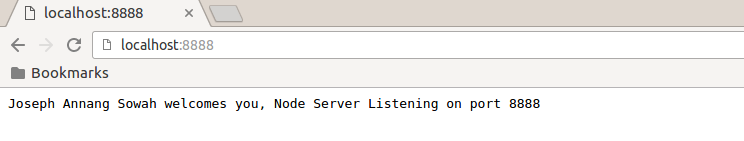

Today, I want to walk through creating a web service and accessing it from a client application.

The focus will be on SOAP-based services; REST will be dealt with at a later date.

Please download the webservice and the client app from the links below:

webservice

webservice client

1. Methodology

This is a simple application that simulates a banking software's account balance request service.

The webservice will be hosted and tested on the Glassfish and Wildfly (JBoss) application servers.

At the end, the webservice will be tested, and a sample client app will be built to show how the webservice is consumed.

A new web project will need to be created to contain the code for the webservice.

Typically, a web project needs a web.xml file and sometimes another config file specific to the hosting application server (glassfish-web.xml or jboss-web.xml).

NB: The screenshots are taken from a NetBeans development environment, and I will begin with the Glassfish server hosting the webservice.

________________________________________________________________________________

2. Code

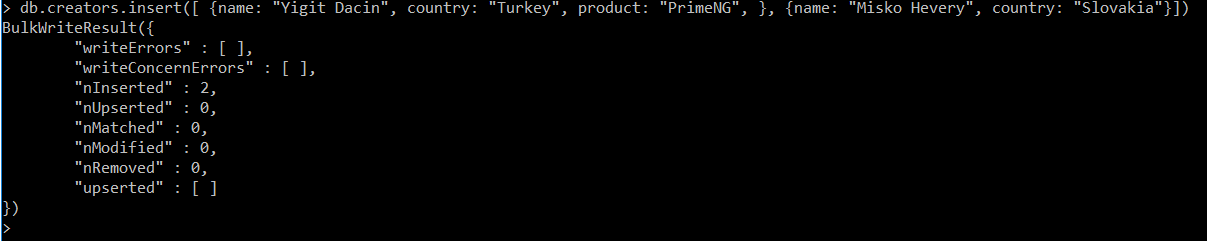

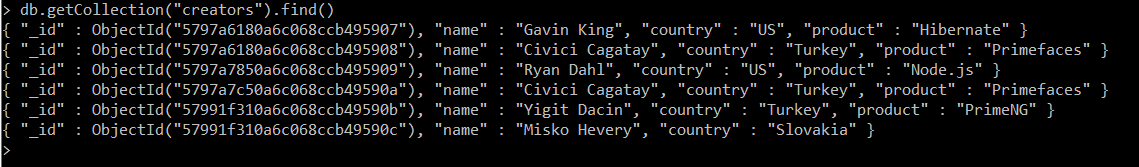

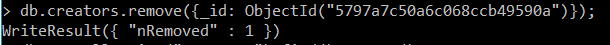

In the wsApp project, I created a webservice class called AccountWS, which exposes two operations: showminbal (no parameters) and showbal (takes one parameter, the bank account number).

    package org.annangsowah.ws.app;

    import java.util.HashMap;
    import java.util.Map;
    import javax.jws.WebMethod;
    import javax.jws.WebParam;
    import javax.jws.WebService;

    /**
     * SOAP webservice that simulates a bank's account balance request service.
     *
     * @author annang
     */
    @WebService
    public class AccountWS {

        // Sample account store: account number -> balance in GHS
        private Map<String, Long> map = null;

        public AccountWS() {}

        @WebMethod(operationName = "showbal")
        public String showBalance(@WebParam(name = "account") String account) {
            // init() populates the accounts map with a sample bank account, 123666
            init();
            Long val = map.get(account);
            return (val != null) ? "A/C Bal is: GHS " + val : "A/C Unavailable";
        }

        @WebMethod(operationName = "showminbal")
        public String showMinimumBalance() {
            return "Minimum A/C Bal is: GHS 500";
        }

        // Rebuilds the in-memory map with the single sample account
        private void init() {
            long bal = 4500;
            map = new HashMap<>();
            map.put("123666", bal);
        }
    }

_________________________________________________________________________________

Once compiled and deployed, the class exposes the showbal and showminbal operations, which webservice clients can invoke.
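
To make "invoking an operation" concrete, here is a minimal sketch of the SOAP 1.1 request bodies a client would send for the two operations. The class name `SoapRequestSketch` is made up for illustration, and the namespace `http://app.ws.annangsowah.org/` is an assumption based on the default JAX-WS derives from the package name; check it against the `targetNamespace` in the generated WSDL before relying on it.

```java
class SoapRequestSketch {

    // Assumed namespace: the JAX-WS default for package org.annangsowah.ws.app.
    // Confirm it against the targetNamespace attribute of the deployed WSDL.
    static final String SERVICE_NS = "http://app.ws.annangsowah.org/";

    static final String SOAP_ENV_NS = "http://schemas.xmlsoap.org/soap/envelope/";

    /** Escapes the characters that are not allowed in XML text content. */
    public static String escapeXml(String text) {
        return text.replace("&", "&amp;")
                   .replace("<", "&lt;")
                   .replace(">", "&gt;");
    }

    /** Wraps an operation element in a SOAP 1.1 envelope and body. */
    private static String envelope(String operationXml) {
        return "<soapenv:Envelope xmlns:soapenv=\"" + SOAP_ENV_NS + "\""
             + " xmlns:ws=\"" + SERVICE_NS + "\">"
             + "<soapenv:Body>" + operationXml + "</soapenv:Body>"
             + "</soapenv:Envelope>";
    }

    /** Request for showbal; "account" matches the @WebParam name in AccountWS. */
    public static String showBalRequest(String account) {
        return envelope("<ws:showbal><account>" + escapeXml(account)
                      + "</account></ws:showbal>");
    }

    /** Request for showminbal, which takes no parameters. */
    public static String showMinBalRequest() {
        return envelope("<ws:showminbal/>");
    }

    public static void main(String[] args) {
        System.out.println(showBalRequest("123666"));
        System.out.println(showMinBalRequest());
    }
}
```

The element names come straight from the `operationName` and `@WebParam` values in AccountWS, so renaming either one in the service changes what clients must send.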

_________________________________________________________________________________

3. Hosting of Webservice in Application Server

Webservices are hosted and served by enterprise software called application servers, of which Glassfish, Wildfly and Microsoft IIS are examples.

A look into the current application server (Glassfish) will show the webservice running and awaiting requests (for account balance and minimum balance).

________________________________________________________________________________

4. Webservice Endpoint and WSDL

Let's now generate the WSDL representation of the SOAP webservice and test the webservice.

Note: The WSDL is an XML document that defines the services as a collection of network endpoints.

In simple terms, the link or URI at which the webservice can be accessed is the service endpoint. There can be multiple endpoints, which may represent the different protocols over which the same webservice can be accessed.

The SOAP webservice endpoint is http://localhost:8080/ws/AccountWSService

and the WSDL can be accessed from http://localhost:8080/ws/AccountWSService?wsdl
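
Since the WSDL is plain XML, the operations it advertises can be read with the JDK's own DOM parser. The sketch below is one way to do that; the class name `WsdlOperationsSketch` and the trimmed WSDL fragment in `main` are hand-written for illustration and are not the document Glassfish actually generates.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

class WsdlOperationsSketch {

    // Namespace of WSDL 1.1 elements such as portType and operation.
    static final String WSDL_NS = "http://schemas.xmlsoap.org/wsdl/";

    /** Returns the operation names declared under the WSDL's portType elements. */
    public static List<String> listOperations(String wsdlXml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true); // required for getElementsByTagNameNS
            Document doc = factory.newDocumentBuilder().parse(
                    new ByteArrayInputStream(wsdlXml.getBytes(StandardCharsets.UTF_8)));

            List<String> names = new ArrayList<>();
            NodeList portTypes = doc.getElementsByTagNameNS(WSDL_NS, "portType");
            for (int i = 0; i < portTypes.getLength(); i++) {
                NodeList ops = ((Element) portTypes.item(i))
                        .getElementsByTagNameNS(WSDL_NS, "operation");
                for (int j = 0; j < ops.getLength(); j++) {
                    names.add(((Element) ops.item(j)).getAttribute("name"));
                }
            }
            return names;
        } catch (Exception e) {
            throw new IllegalArgumentException("Could not parse WSDL", e);
        }
    }

    public static void main(String[] args) {
        // Hand-written, heavily trimmed stand-in for the real WSDL.
        String sample =
              "<definitions xmlns=\"http://schemas.xmlsoap.org/wsdl/\" name=\"AccountWSService\">"
            + "<portType name=\"AccountWS\">"
            + "<operation name=\"showbal\"/>"
            + "<operation name=\"showminbal\"/>"
            + "</portType>"
            + "</definitions>";
        System.out.println(listOperations(sample)); // prints [showbal, showminbal]
    }
}
```

Against a running server, the text fetched from the `?wsdl` URL above can be passed to `listOperations` in place of the sample string.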

_________________________________________________________________________________

5. Testing the Webservice without a Client

Glassfish, as an application server, provides a simple way of testing webservices.

This is possible by appending "?Tester" to the webservice endpoint, i.e.

http://localhost:8080/ws/AccountWSService?Tester

1. Testing showminbal operation - expected to display an account's minimum balance

When the showminbal operation is invoked, the webservice response will be "Minimum A/C Bal is: GHS 500" as shown below:

2. Testing showbal operation - expected to display an account's balance

The showbal operation is invoked by submitting the account number as a parameter.

Note: The account number 123666 is created in the init() function of the AccountWS class.

If the account entry is 123666, the webservice response will be "A/C Bal is: GHS 4500" as shown below:

For any account number other than 123666, the webservice response will be "A/C Unavailable" as shown below.
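
The same test can also be run without the Tester page by posting a SOAP envelope over HTTP and reading the reply. The following is a minimal sketch using only JDK classes (Java 9 or later for `readAllBytes`). The class name `RawSoapCallSketch` is made up, and it assumes the JAX-WS defaults of an empty `SOAPAction` and a result element named `return`; verify both against the WSDL.

```java
import java.io.ByteArrayInputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.net.HttpURLConnection;
import java.net.URI;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.NodeList;

class RawSoapCallSketch {

    /** POSTs a SOAP 1.1 envelope to the endpoint and returns the raw response body. */
    public static String post(String endpoint, String envelope) throws IOException {
        HttpURLConnection conn =
                (HttpURLConnection) URI.create(endpoint).toURL().openConnection();
        conn.setRequestMethod("POST");
        conn.setDoOutput(true);
        conn.setRequestProperty("Content-Type", "text/xml; charset=utf-8");
        conn.setRequestProperty("SOAPAction", "\"\""); // assumed default: empty action
        try (OutputStream out = conn.getOutputStream()) {
            out.write(envelope.getBytes(StandardCharsets.UTF_8));
        }
        // getInputStream() throws IOException on HTTP error codes, e.g. a SOAP fault.
        try (InputStream in = conn.getInputStream()) {
            return new String(in.readAllBytes(), StandardCharsets.UTF_8);
        }
    }

    /** Extracts the text of the first unqualified return element from a SOAP response. */
    public static String extractReturn(String responseXml) {
        try {
            DocumentBuilderFactory factory = DocumentBuilderFactory.newInstance();
            factory.setNamespaceAware(true);
            Document doc = factory.newDocumentBuilder().parse(
                    new ByteArrayInputStream(responseXml.getBytes(StandardCharsets.UTF_8)));
            NodeList nodes = doc.getElementsByTagName("return");
            if (nodes.getLength() == 0) {
                throw new IllegalStateException("No <return> element in response");
            }
            return nodes.item(0).getTextContent();
        } catch (IllegalStateException e) {
            throw e;
        } catch (Exception e) {
            throw new IllegalArgumentException("Could not parse SOAP response", e);
        }
    }
}
```

With the service deployed, calling `extractReturn(post("http://localhost:8080/ws/AccountWSService", envelope))` with a showbal envelope for account 123666 should yield the balance message described above, and any other account number should yield "A/C Unavailable".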

_________________________________________________________________________________

6. Access the Same Webservice from Another Application Server: JBoss Wildfly

This requires that the app be configured to run on the Wildfly application server instead.

This is to prove that webservices run on standard protocols irrespective of the hosting container or server.

________________________________________________________________________________

7. Create Sample Webservice Client

The client is a web-page that enables the user to straightaway check the general minimum balance for all accounts. It also enables a user to check a specified account's current balance.

Sample Client Request

Response from Webservice

Below is the response from the webservice operation: Show Min Bal (showminbal)

Also below is the response from the webservice operation: Show Account Bal (showbal)

_________________________________________________________________________________

Client Design Technical Details

The client is a basic JavaServer Pages (JSP) page (account.jsp) which sends a request (e.g. check balance) to a Java servlet (AccountServlet), which in turn forwards it to our webservice.
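
As a rough sketch of the forwarding step only (this is not the project's actual AccountServlet), the logic a servlet would run after reading the form parameters could look like the plain Java below. Everything named here is hypothetical: the `AccountPort` interface stands in for the webservice client proxy, and the `action` values and the two error messages are invented for illustration.

```java
// Hypothetical stand-in for the webservice client proxy used by the servlet.
interface AccountPort {
    String showbal(String account);
    String showminbal();
}

class AccountRequestForwarder {

    private final AccountPort port;

    AccountRequestForwarder(AccountPort port) {
        this.port = port;
    }

    /**
     * Maps a form submission to a webservice operation.
     * "action" and "account" are hypothetical request parameter names.
     */
    public String handle(String action, String account) {
        if ("showminbal".equals(action)) {
            return port.showminbal();
        }
        if ("showbal".equals(action)) {
            if (account == null || account.trim().isEmpty()) {
                return "Please enter an account number"; // hypothetical message
            }
            return port.showbal(account.trim());
        }
        return "Unknown request"; // hypothetical message
    }

    public static void main(String[] args) {
        // In-memory stub that mimics the responses of AccountWS.
        AccountPort stub = new AccountPort() {
            @Override
            public String showbal(String account) {
                return "123666".equals(account)
                        ? "A/C Bal is: GHS 4500" : "A/C Unavailable";
            }

            @Override
            public String showminbal() {
                return "Minimum A/C Bal is: GHS 500";
            }
        };
        AccountRequestForwarder forwarder = new AccountRequestForwarder(stub);
        System.out.println(forwarder.handle("showminbal", null));
        System.out.println(forwarder.handle("showbal", "123666"));
    }
}
```

Keeping this logic behind an interface means the page flow can be exercised with a stub, without a running application server.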

The project structure is captured below:

The code for the account.jsp page is shown below.

The code for the AccountServlet class is shown below.
